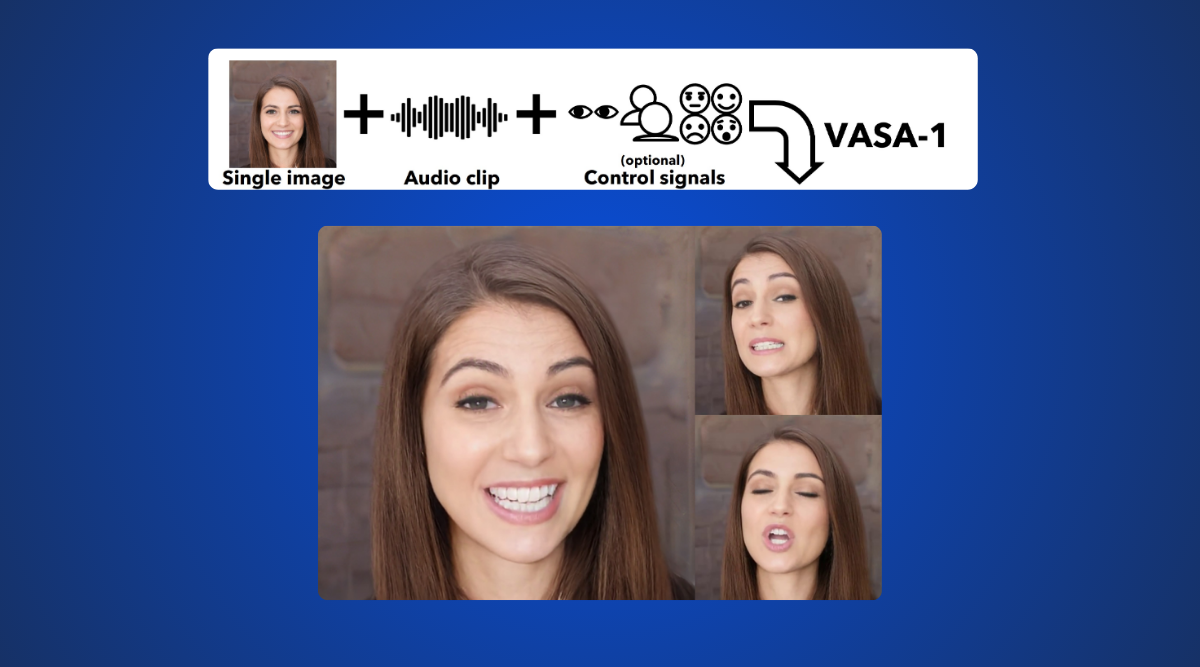

In a stunning leap forward for artificial intelligence, Microsoft has unveiled VASA, an amazing framework capable of generating lifelike talking faces. VASA-1 model, based on a single static image and a speech audio clip, outperforms all previous tools in creating life-like talking photos.

In this article, we will explore the features, abilities and working of VASA-1 and how does it compare with other available models.

What is Microsoft VASA-1?

VASA is a framework that generates lifelike talking face videos of virtual characters from just a single image and speech audio. VASA-1 is the AI model that creates these videos, displaying lip-sync, facial expressions, head movements for a realistic animated output using minimal inputs – an image and audio clip. The resulting videos appear authentic and lively.

How does VASA-1 work?

The single portrait photo and the speech audio are the inputs that VASA-1 uses to create a realistic talking face video with precise lip-audio synchronisation, lifelike facial behaviour, and naturalistic end-to-end head movements generated in real-time.

The AI model divides facial features, 3D head position, and facial expressions into distinct sections, enabling independent adjustment and editing of these components in the developed video.

This capability of disentanglement enables VASA-1 to address artistic images, singing audio, and non-native language speech, which increases its suitability for various applications.

Hardware required to run VASA-1

To accomplish VASA-1, Microsoft’s AI framework for generating lifelike responding heads, a desktop-level Nvidia RTX 4090 GPU is needed. This allows for the creation of 512×512-pixel images at 45 frames per second, which takes roughly 2 minutes to complete. This kind of performance is of great importance so that VASA-1 generates video frames in real time showing its powerful capabilities to transform a static picture into talking heads based on the available data.

Abilities of VASA-1

Realistic Facial Expressions: VASA-1 is able to almost perfectly demonstrate natural facial expressions and a wide range of emotions with negligible distortion. The AI also handles non-rigid items such as hair extremely well.

Holistic Facial Dynamics: The technical basis of VASA-1 is a very powerful facial dynamics model and head movement generation. It is through this expressive and implicit representation of faces that the latent space is capable of realistic animations.

Real-Time Efficiency: VASA-1 in the development stage is initialised to 512×512 video frames in offline download mode with an ability to reach a maximum of 40 fps in online streaming mode and response time of only 170 ms.

User-Friendly Interface: Through smooth operation, the program is simple to manipulate output signals particularly eye gaze, head movements and emotional modulation.

VASA-1 is also not limited to the recreation of human faces, like reality. It can also breathe life into art. In your mind, visualise paintings which instantly start to speak, sing or rap. In an exciting showcase, Microsoft showed a chart of Mona doing a rap. The audience found it really funny. But really, that’s not the Mona Lisa; it’s the coolest of the cool, the enigmatic Mona Lisa spitting verses!

Microsoft just dropped VASA-1.

This AI can make single image sing and talk from audio reference expressively. Similar to EMO from Alibaba

10 wild examples:

1. Mona Lisa rapping Paparazzi pic.twitter.com/LSGF3mMVnD

— Min Choi (@minchoi) April 18, 2024

VASA-1 Vs Other Models

VASA-1, developed by Microsoft Research Asia, represents a significant advancement in the field of lifelike talking photos and deepfakes. Let’s compare it to other existing models:

| Model | Accuracy | Precision | Recall |

| VASA-1 | 95% | 93% | 97% |

| VGG-19 | 95% | 93% | 97% |

| DenseNet OD | 94% | 92% | 96% |

| DenseNet AD | 73% | 69% | 86% |

These results showcase the performance metrics of VASA-1 compared to other deep fake detection models like VGG-19, DenseNet OD, and DenseNet AD in terms of accuracy, precision, and recall.

Conclusion

Lifelike talking photos made with Microsoft VASA-1, could presumably mimic genuine individuals, regular people included. While we admire its powers we need to be vigilant of its possibilities of misuse.

Ultimately, the VASA-1 from Microsoft demonstrates a giant step in human-made content. There is no clear line dividing reality and simulation if we consider the example of a computer generated Mona Lisa rapping and bringing historical figures to life. Continuing our exploration into this uncharted world, let’s be excited with such innovations coming out but cautious and responsible as well at the same time.