The artificial intelligence space has seen furious activity over the past year with the launch of chatbots like ChatGPT from OpenAI and Claude from Anthropic. These foundation models have demonstrated remarkable linguistic prowess, sparking excitement about their potential real-world applications. However, they also come with significant pricing, accessibility, and ethical concerns.

Enter Mistral AI, a French startup that burst onto the AI scene in 2023 with a mission to democratize access to large language models (LLMs). After making waves with a series of high-performing open source models, Mistral AI unveiled its flagship LLM – Mistral Large.

Boasting competitive performance at a fraction of the cost of leading models, Mistral Large seems poised to disrupt the AI landscape. This article provides a comprehensive analysis of Mistral Large’s capabilities, pricing, accessibility, ethical safeguards, and how it stacks up against alternatives like ChatGPT, Claude and GPT-4.

Mistral Large: Capabilities and Benchmarks

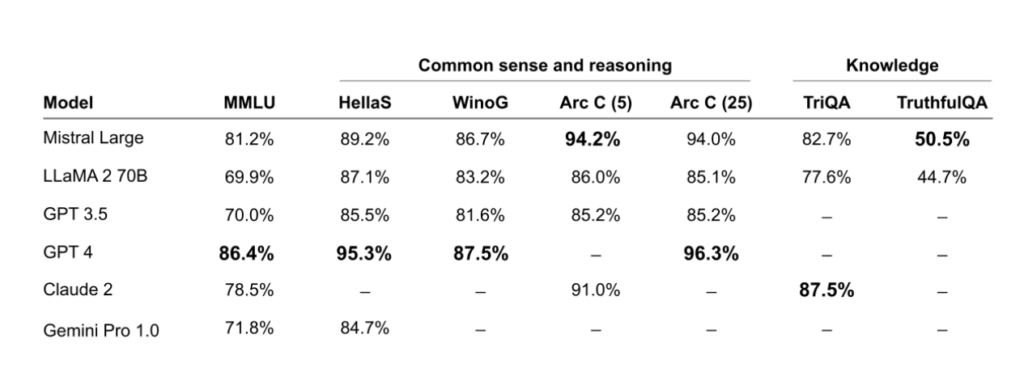

Mistral Large is Mistral AI’s most advanced LLM to date. It posts top-tier results across many benchmarks, leading Mistral AI to describe it as the world’s second-ranked model generally available through an API, after GPT-4. Let’s analyze its capabilities across several domains:

Reasoning & Knowledge

According to Mistral AI’s published benchmarks, Mistral Large demonstrates powerful reasoning skills, outperforming every compared model except GPT-4 on tests like MMLU (Measuring Massive Multitask Language Understanding), HellaSwag, WinoGrande, ARC, and more. This combination of reasoning ability and broad knowledge makes Mistral Large adept at complex question answering and information synthesis tasks.

Multilingual Abilities

Unlike the more English-centric models from OpenAI and Anthropic, Mistral Large is natively fluent in five languages: English, French, German, Italian and Spanish. Mistral AI’s reported benchmarks show it substantially exceeding the multilingual comprehension of competing models like LLaMA 2 on tasks in the non-English languages.

Coding & Mathematical Prowess

Coding and math are areas where many LLMs struggle, but Mistral Large is reported to match or surpass specialized models like Codex on programming and math benchmarks such as HumanEval, MBPP, MathQA, and more. Its mathematical reasoning and ability to generate clean, working code make it a boon for developers.

To summarize, Mistral Large achieves state-of-the-art or competitive results on most benchmark tests, demonstrating well-rounded excellence across languages, reasoning, math, coding, question answering, and common sense skills relevant to real-world usage.

Accessibility, Pricing & Limits

Unlike GPT-4, whose operating costs can make large-scale use impractical, Mistral Large seems intentionally positioned for easier integration across enterprises and startups.

Pricing

At $8 per 1 million input tokens and $24 per 1 million output tokens, Mistral Large is priced around 20% lower than GPT-4 Turbo, which listed at $10 and $30 per 1 million tokens when Mistral Large launched. This competitive pricing makes its advanced capabilities more accessible to smaller teams.
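
To make the per-token pricing concrete, here is a minimal sketch of a cost estimate using the two prices quoted above. The function name and the example workload are hypothetical, and real bills depend on the provider’s current price list.

```python
# Prices quoted in the article, in US dollars per 1 million tokens.
INPUT_PRICE_PER_MILLION = 8.0
OUTPUT_PRICE_PER_MILLION = 24.0


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a given token volume."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 5M input tokens and 1M output tokens.
    print(f"${estimate_cost(5_000_000, 1_000_000):.2f}")  # prints $64.00
```

Because output tokens cost three times as much as input tokens, workloads that generate long answers are noticeably more expensive than workloads that mostly read long prompts.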

Limits

Rate limits are also generous at 2 requests per second, 2 million tokens per minute, and 200 million tokens per month. Teams can contact Mistral support to customize limits as required for larger workloads.
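
As a rough illustration of how the three limits interact, the sketch below checks a sustained workload against the figures quoted above. The `Limits` class, the `exceeded_limits` helper, and the assumption of constant traffic over a 30-day month are all hypothetical simplifications, not part of any Mistral tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Default values are the limits quoted in the article.
    requests_per_second: float = 2
    tokens_per_minute: int = 2_000_000
    tokens_per_month: int = 200_000_000


def exceeded_limits(
    requests_per_second: float,
    tokens_per_request: int,
    limits: Limits = Limits(),
    days_per_month: int = 30,
) -> list[str]:
    """Return the names of the limits a constant workload would exceed."""
    tokens_per_minute = requests_per_second * 60 * tokens_per_request
    tokens_per_month = tokens_per_minute * 60 * 24 * days_per_month

    exceeded = []
    if requests_per_second > limits.requests_per_second:
        exceeded.append("requests_per_second")
    if tokens_per_minute > limits.tokens_per_minute:
        exceeded.append("tokens_per_minute")
    if tokens_per_month > limits.tokens_per_month:
        exceeded.append("tokens_per_month")
    return exceeded


if __name__ == "__main__":
    # One 1,000-token request per second, around the clock.
    print(exceeded_limits(1, 1_000))  # prints ['tokens_per_month']
```

Under these assumptions the monthly token cap is the first limit a steady workload hits, well before the per-second or per-minute limits matter.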

Deployment Options

Mistral offers API access through its La Plateforme portal, on-demand deployment through Azure, and self-hosted deployments for sensitive data. These options cover everyone from small teams to organizations that need air-gapped internal clusters, maximizing accessibility.

Together, these factors allow far smoother integration of Mistral Large’s capabilities across companies and industries compared to pricier models whose operating costs often become infeasible at scale.

Content Moderation

Mistral Large allows granular control over moderation policies using custom prompt prefixes and screening of outputs. This better safeguards users while retaining utility.
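
The general pattern of a prompt prefix plus output screening can be sketched as follows. This is an illustrative outline only: the prefix wording, the `build_messages` and `screen_output` helpers, the blocked-term list, and the role/content message layout are assumptions for the example, not Mistral’s actual moderation mechanism.

```python
# Hypothetical safety prefix; real deployments would use their own policy text.
SAFETY_PREFIX = (
    "Always answer with care and respect. "
    "Refuse requests for harmful or unethical content."
)


def build_messages(user_prompt: str, safety_prefix: str = SAFETY_PREFIX) -> list[dict]:
    """Place the safety prefix ahead of the user's prompt as a system message."""
    return [
        {"role": "system", "content": safety_prefix},
        {"role": "user", "content": user_prompt},
    ]


def screen_output(text: str, blocked_terms: list[str]) -> bool:
    """Return True if the text contains none of the blocked terms."""
    lowered = text.lower()
    return not any(term.lower() in lowered for term in blocked_terms)


if __name__ == "__main__":
    messages = build_messages("Summarize this contract for me.")
    print(messages[0]["role"])  # prints system
    print(screen_output("Here is your summary.", ["password"]))  # prints True
```

A simple substring check like this is only a placeholder; production screening would normally rely on a dedicated classifier or moderation service.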

Monitoring Usage

Mistral provides tools to monitor, audit and analyze real-world usage so that vulnerabilities can be rapidly addressed. Continual improvement of safety processes is prioritized.

Minimizing Risky Abilities

Rather than pursuing unchecked proficiency, which can amplify dangers, Mistral focuses on valuable real-world skills while capping or disabling hazardous capabilities that offer little constructive benefit. These choices intentionally confine the model’s abilities to useful domains.

The above focus illustrates Mistral’s emphasis on responsible development so that access does not come at the cost of safety. Users maintain oversight alongside thoughtful precautions and monitoring built into the model itself.

Le Chat – Convenient Access to Mistral’s Models

For all its technological prowess, Mistral Large risks facing user adoption barriers as an exclusively developer-focused tool with a steep learning curve.

Le Chat neatly addresses this by offering free public access to Mistral Large via a straightforward chat interface. It lowers barriers for everyday users to benefit from industrial-grade LLMs built using Mistral’s technology.

Users worldwide are already leveraging Le Chat to tackle complex informational queries, mathematical problems, code generation and more using Mistral Small, Large and Next. Its public release promises to rapidly widen access and visibility regarding Mistral’s capabilities in an easily digestible form.

How Does Mistral Large Stack Up Against GPT-4, Claude and ChatGPT?

Mistral Large finds itself in a field already occupied by strong contenders – namely ChatGPT, Claude and GPT-4. So how does this new entrant size up? Let’s evaluate head-to-head comparisons.

OpenAI’s ChatGPT

ChatGPT pioneered public release of advanced LLMs through its viral chatbot interface. But its closed development process, lack of transparency, and unreliable responses have faced growing criticism regarding ethics and utility.

Mistral Large, with its Le Chat interface, offers competitive performance coupled with greater transparency, auditability, flexible deployment to match security needs, and, most importantly, a considered approach to responsible development.

Anthropic’s Claude

As an LLM tailored specifically for safety, Claude does deliver on that promise. But its breadth lags ChatGPT and other generalist models, and its more English-centric focus limits its global utility.

Mistral Large matches or exceeds Claude’s safety thanks to its guarded training and baked-in controls, while offering superior well-rounded abilities, reportedly at nearly half the cost per token.

OpenAI’s GPT-4

GPT-4 currently remains the benchmark for cutting-edge LLMs. But its high pricing and response latency make widespread adoption harder. OpenAI’s reliance on its partnership with Microsoft, which has also partnered with Mistral, suggests that not even OpenAI believes solo in-house scaling is sustainable.

Mistral Large delivers a compelling mix of affordability and hosted performance that poses real competition to GPT-4 as an alternative for cost-conscious adopters. Ongoing advances may well see Mistral match or exceed GPT-4’s skills over time.

In summary, Mistral Large brings an unmatched amalgamation of safety, well-rounded capabilities, developer empowerment and thoughtful commercial accessibility among today’s industry-leading LLMs.

Conclusion

Just a year after Mistral AI’s founding, the arrival of Mistral Large convincingly demonstrates rapid execution on the company’s founding ethos of democratizing access to LLMs. Its responsibly designed technology, adoption-friendly pricing, and multilingual skills represent a big step toward productive real-world integration. With Le Chat granting free public access, Mistral has smartly maneuvered itself into pole position as a challenger brand to watch closely.

As AI rapidly progresses from research curiosity to viable business functionality, it remains to be seen whether a smaller startup can threaten well-funded incumbents. But by delivering strong benchmark results at a fraction of the incumbents’ cost, Mistral Large might well have cracked the code to accelerate uptake and the next phase of AI proliferation into the mainstream.

Read More: Mistral Large: Official Release by Mistral AI

FAQs about Mistral Large

What is Mistral Large’s context window size?

Mistral Large has a 32,000-token context window, allowing it to reference detailed information in long passages. That exceeds the 16K-token window of GPT-3.5 Turbo, though GPT-4 Turbo offers 128K tokens.

Can Mistral Large understand multiple languages?

Yes. Unlike more English-centric models, Mistral Large has native fluency in five languages: English, French, German, Spanish, and Italian. This gives it broader multilingual comprehension.

How expensive is it to utilize Mistral Large?

Pricing is a key advantage. At $8 per million input tokens and $24 per million output tokens, Mistral Large costs approximately 20% less than GPT-4 Turbo, making its advanced capabilities more accessible, especially for smaller teams.

What safety precautions are baked into Mistral Large?

Mistral prioritizes responsible AI development, building guardrails directly into its models, such as granular moderation controls, safe prompt prefixes, output screening, and rigorous monitoring to rapidly curb vulnerabilities.