Google unveiled Gemma on 21 February 2024. Gemma is a family of state-of-the-art open AI language models: anyone can download the weights and use them under Google's Gemma Terms of Use. The release reflects the growing push to democratize AI that followed the ChatGPT frenzy OpenAI set off in 2022.

I tried a Gemma-based chatbot, and it feels much faster than Gemini. While I cannot comment on the accuracy of its outputs, the quality of its content is close to Gemini's.

Let’s look in detail at what Google Gemma is and how you can use it.

What is Google Gemma?

Google Gemma is a family of open large language models (LLMs) developed by Google DeepMind and other teams across Google. Because its weights are freely downloadable, it makes Google's AI research far more accessible than a closed, API-only model. It is built from the same research and technology as the powerful Gemini models, but is designed to be lightweight enough for a much wider range of users and applications.

Google Gemma Models

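Google has launched Gemma in two sizes: Gemma 2B and Gemma 7B. Each size comes in a pre-trained variant, a base model that continues the text it is given, and an instruction-tuned variant, further trained to follow instructions and hold a conversation. These models are lightweight and built from the same research and technology used to create the larger Gemini models.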

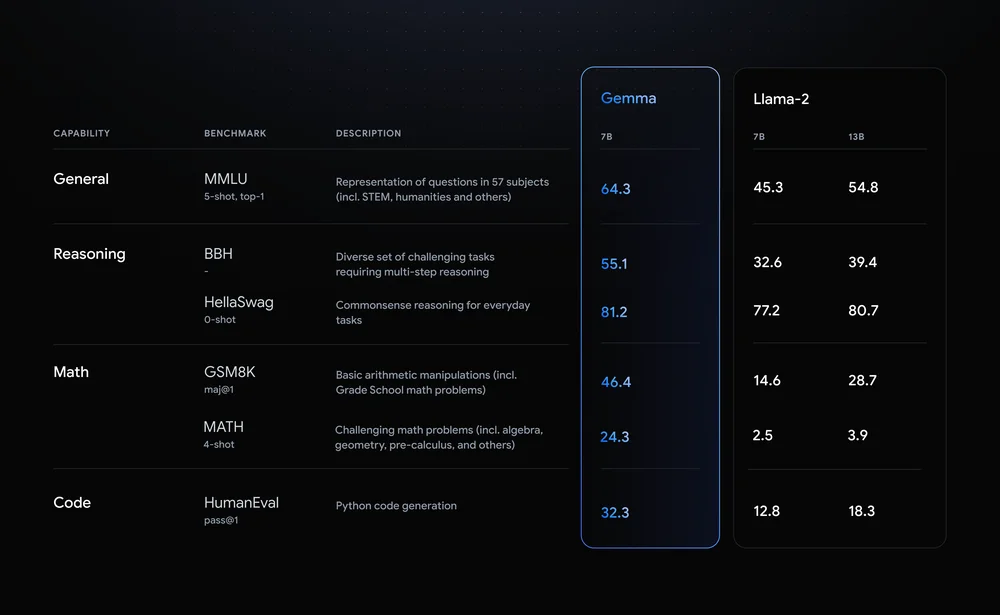

According to Google, Gemma outperforms significantly larger models on key benchmarks while adhering to rigorous standards for safe and responsible outputs.

Google also shared a benchmark comparison of Gemma with Llama 2, Meta’s open AI model.

How to use Google Gemma?

If you are a tech enthusiast without a development background, you can try Gemma directly as a chatbot on HuggingChat.

Developers can access the pre-trained and instruction-tuned variants of Gemma, suited to different computational environments and application needs, through popular frameworks and platforms, including:

- Colab: Use Colab notebooks for research and development.

- Kaggle: You can access Gemma for free on Kaggle.

- Google Cloud: Gemma is available on Google Cloud through Vertex AI and Google Kubernetes Engine (GKE).

- Hugging Face: Gemma's model checkpoints are published as repositories on the Hugging Face Hub.

- NVIDIA NeMo: Gemma can be accessed through NVIDIA’s NeMo framework.
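For developers taking the Hugging Face route, here is a minimal sketch of querying an instruction-tuned Gemma checkpoint with the `transformers` library. It assumes `transformers` and PyTorch are installed, that you have accepted Gemma's license on the Hugging Face Hub and logged in (the checkpoints are gated), and that the repository IDs follow the `google/gemma-2b` / `google/gemma-2b-it` naming used at launch. `gemma_checkpoint` and `generate` are hypothetical helper names for illustration, not part of any official API.

```python
"""Minimal sketch: query an instruction-tuned Gemma checkpoint via Hugging Face.

Assumptions: `transformers` and `torch` are installed, Gemma's license has been
accepted on the Hugging Face Hub, and you are logged in (e.g. `huggingface-cli login`).
"""


def gemma_checkpoint(size: str = "2b", instruction_tuned: bool = True) -> str:
    """Return a Hub repo ID such as 'google/gemma-2b-it' (hypothetical helper)."""
    if size not in ("2b", "7b"):
        raise ValueError(f"Gemma launched in '2b' and '7b' sizes, got {size!r}")
    suffix = "-it" if instruction_tuned else ""
    return f"google/gemma-{size}{suffix}"


def generate(prompt: str, size: str = "2b", max_new_tokens: int = 64) -> str:
    """Load an instruction-tuned Gemma model and return its reply to `prompt`."""
    # Imported here so gemma_checkpoint() is usable without these heavy packages.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = gemma_checkpoint(size, instruction_tuned=True)
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # The tokenizer's chat template wraps the message in Gemma's turn markers and
    # already includes the BOS token, so special tokens are not added a second time.
    chat = [{"role": "user", "content": prompt}]
    text = tokenizer.apply_chat_template(chat, tokenize=False, add_generation_prompt=True)
    inputs = tokenizer(text, return_tensors="pt", add_special_tokens=False)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, not the echoed prompt.
    prompt_length = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0][prompt_length:], skip_special_tokens=True)
```

Calling `generate("Summarize what Gemma is in one sentence.")` downloads several gigabytes of weights on first use. By default `from_pretrained` loads the model on CPU, so expect slow generation without a GPU.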

Ready-to-use Colab and Kaggle notebooks are available to help you start using Gemma. Toolchains for inference and supervised fine-tuning (SFT) are provided across major frameworks: JAX, PyTorch, and TensorFlow through native Keras 3.0.
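On the Keras 3 side, KerasNLP exposes Gemma as a causal language model class that runs on JAX, PyTorch, or TensorFlow. The sketch below assumes the `keras-nlp` package with Keras 3, the JAX backend, and Kaggle credentials (Gemma's Keras weights are downloaded from Kaggle after accepting the license). The preset names are the ones published at launch and may have changed since; `GEMMA_PRESETS` and `keras_generate` are hypothetical names for illustration.

```python
import os

# Keras 3 reads its backend from this variable at import time, so choose it
# before Keras is imported. Default to JAX unless a backend was already set.
os.environ.setdefault("KERAS_BACKEND", "jax")

# Launch-time KerasNLP preset names, keyed by (size, instruction_tuned).
GEMMA_PRESETS = {
    ("2b", False): "gemma_2b_en",
    ("2b", True): "gemma_instruct_2b_en",
    ("7b", False): "gemma_7b_en",
    ("7b", True): "gemma_instruct_7b_en",
}


def keras_generate(prompt: str, size: str = "2b", instruction_tuned: bool = False,
                   max_length: int = 64) -> str:
    """Load a Gemma preset with KerasNLP and complete `prompt` (hypothetical wrapper)."""
    # Imported lazily: needs keras-nlp installed and Kaggle credentials configured.
    import keras_nlp

    preset = GEMMA_PRESETS[(size, instruction_tuned)]
    gemma_lm = keras_nlp.models.GemmaCausalLM.from_preset(preset)
    # max_length bounds the total sequence length, prompt included.
    return gemma_lm.generate(prompt, max_length=max_length)
```

Switching `KERAS_BACKEND` to `"torch"` or `"tensorflow"` should run the same code on the other frameworks, which is the main appeal of the Keras 3 toolchain.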

Gemma models can run on your laptop, workstation, or Google Cloud, with easy deployment options.

Features of Google Gemma

- Model Variants: Gemma comes in two sizes, Gemma 2B and Gemma 7B, each available in pre-trained and instruction-tuned variants.

- Responsible AI Toolkit: Google released a Responsible Generative AI Toolkit alongside Gemma. It provides guidance and essential tools for building safer AI applications with Gemma.

- Framework Support: Toolchains for inference and SFT are provided across major frameworks like JAX, PyTorch, and TensorFlow through native Keras 3.0. Integration with popular tools such as Hugging Face, MaxText, NVIDIA NeMo, and TensorRT-LLM makes it easy to get started with Gemma.

- Deployment Flexibility: Pre-trained and instruction-tuned Gemma models can run on your laptop, workstation, or Google Cloud. Deployment options include Vertex AI and Google Kubernetes Engine (GKE).

- Performance and Safety: Gemma models share technical components with Gemini, Google’s largest and most capable AI model. Google reports that, despite their smaller sizes, Gemma 2B and 7B achieve best-in-class performance for their sizes compared with other open models.
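The instruction-tuned variants expect conversations wrapped in turn markers rather than plain text, while the pre-trained variants simply continue whatever text they receive. The sketch below builds an instruction-tuned prompt by hand, assuming the `<start_of_turn>` / `<end_of_turn>` format with `user` and `model` roles described in Gemma's model card. In practice a library's chat template (for example in Hugging Face `transformers`) usually does this for you; `format_gemma_chat` is a hypothetical helper name.

```python
from typing import List, Tuple

# Gemma's instruction-tuned format uses two roles: "user" and "model".
VALID_ROLES = {"user", "model"}


def format_gemma_chat(turns: List[Tuple[str, str]], bos: str = "<bos>") -> str:
    """Render (role, text) turns as a Gemma-style prompt ending in an open model turn.

    Pass bos="" if your tokenizer already prepends the BOS token.
    """
    parts = [bos]
    for role, text in turns:
        if role not in VALID_ROLES:
            raise ValueError(f"unsupported role {role!r}; use 'user' or 'model'")
        parts.append(f"<start_of_turn>{role}\n{text}<end_of_turn>\n")
    # Leave the model's turn open so generation continues from here.
    parts.append("<start_of_turn>model\n")
    return "".join(parts)


prompt = format_gemma_chat([("user", "Write a haiku about open models.")])
```

Multi-turn chats work the same way: append earlier `("model", reply)` turns to the list so the model sees the full conversation before its next reply.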

Conclusion

A key goal of Gemma's release is to make Google's technology openly available and help developers and researchers understand and build on it. Thanks to its lightweight design and strong performance, Gemma is likely to find use in many areas, such as education, content creation, software development, and automating routine work.